Publications

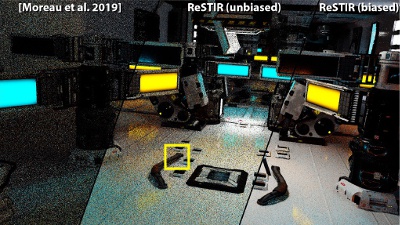

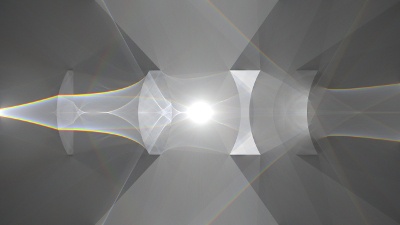

Spatiotemporal reservoir resampling for real-time ray tracing with dynamic direct lighting

Efficiently rendering direct lighting from millions of dynamic light sources using Monte Carlo integration remains a challenging problem, even for offline rendering systems. We introduce a new algorithm, ReSTIR, that renders such lighting interactively, at high quality, and without needing to maintain complex data structures. We derive an unbiased Monte Carlo estimator for this approach that reaches equal error 6× to 60× faster than state-of-the-art methods, and a biased estimator that is 35× to 65× faster, at the cost of some energy loss.

ACM Transactions on Graphics (Proc. of SIGGRAPH), 2020
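ReSTIR builds on resampled importance sampling (RIS) with a streaming weighted reservoir. The sketch below is a minimal, single-pixel illustration of that building block only, assuming a discrete list of lights, uniform candidate sampling, and a caller-supplied target function `p_hat`. The spatial and temporal reuse that combines reservoirs across pixels and frames is omitted, and the names `Reservoir` and `ris_estimate` are hypothetical.

```python
import random

class Reservoir:
    """Streaming weighted reservoir that keeps a single selected sample."""
    def __init__(self):
        self.sample = None  # currently selected candidate
        self.w_sum = 0.0    # running sum of resampling weights
        self.m = 0          # number of candidates seen so far

    def update(self, candidate, weight, rng):
        self.w_sum += weight
        self.m += 1
        # Replace the kept sample with probability weight / w_sum.
        if self.w_sum > 0.0 and rng.random() < weight / self.w_sum:
            self.sample = candidate

def ris_estimate(f, p_hat, num_candidates, rng):
    """One-sample RIS estimate of sum(f) over a discrete set of lights.

    f[i]     -- integrand value for light i (e.g. its shaded contribution)
    p_hat[i] -- target function for resampling; must be > 0 wherever f[i] > 0
    Candidates are drawn uniformly, i.e. with source pdf 1/N.
    """
    n = len(f)
    source_pdf = 1.0 / n
    r = Reservoir()
    for _ in range(num_candidates):
        i = rng.randrange(n)
        r.update(i, p_hat[i] / source_pdf, rng)
    if r.sample is None:
        return 0.0
    y = r.sample
    # Contribution weight W = w_sum / (M * p_hat(y)) makes f(y) * W unbiased.
    W = r.w_sum / (r.m * p_hat[y])
    return f[y] * W
```

Because a reservoir stores only one sample plus a running weight sum, streaming more candidates through it costs constant memory, which is what makes merging reservoirs cheap.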

Selectively Metropolised Monte Carlo light transport simulation

Light transport is a complex problem with many solutions. Practitioners are now faced with the difficult task of choosing which rendering algorithm to use for any given scene. We use a simple transport method (e.g. path tracing) as the base, and treat high variance “fireflies” as seeds for a Markov chain that locally uses a Metropolised version of a more sophisticated transport method for exploration, removing the firefly in an unbiased manner. Through careful design choices, we ensure our algorithm never performs much worse than the base estimator alone, and usually performs significantly better, thereby reducing the need to experiment with different algorithms for each scene.

ACM Transactions on Graphics (Proc. of SIGGRAPH Asia), 2019

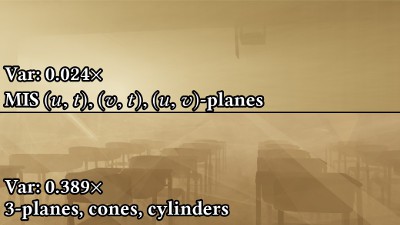

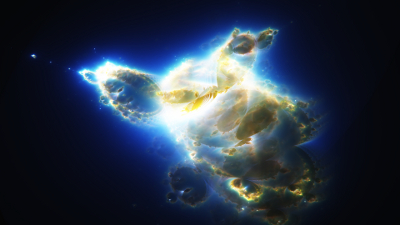

Photon surfaces for robust, unbiased volumetric density estimation

We generalize photon planes to photon surfaces: a new family of unbiased volumetric density estimators. These estimators allow us to handle light paths not supported by photon planes, including single scattering and surface-to-media transport. More importantly, since our estimators have complementary strengths due to analytically integrating different dimensions of the path integral, we can combine them using multiple importance sampling.

ACM Transactions on Graphics (Proc. of SIGGRAPH), 2019

A radiative transfer framework for non-exponential media

We develop a new theory of volumetric light transport for media with non-exponential free-flight distributions. Our theory formulates a non-exponential path integral as the result of averaging stochastic classical media, and we introduce practical models to solve the resulting averaging problem efficiently. We address important considerations for graphics including reciprocity and bidirectional rendering algorithms, all in the presence of surfaces and correlated media.

ACM Transactions on Graphics (Proc. of SIGGRAPH Asia), 2018

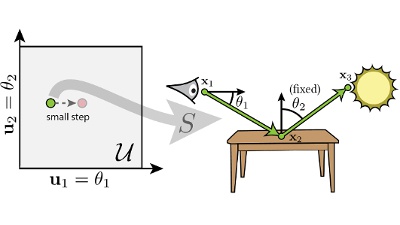

Reversible Jump Metropolis Light Transport using Inverse Mappings

We study Markov chain Monte Carlo (MCMC) methods operating in primary sample space and their interactions with multiple sampling techniques. We introduce a novel perturbation that can locally transition between sampling techniques without changing the geometry of the path. We combine this idea with the Reversible Jump MCMC framework to obtain an unbiased algorithm, which we call Reversible Jump MLT (RJMLT), that shows improved temporal coherence, fewer structured artifacts, and faster convergence.

ACM Transactions on Graphics (TOG), 2017

An Efficient Denoising Algorithm for Global Illumination

We propose a hybrid ray-tracing/rasterization strategy for real-time rendering enabled by a fast new denoising method. We factor global illumination into direct light at rasterized primary surfaces and two indirect lighting terms, each estimated with one path-traced sample per pixel. Our factorization enables efficient (biased) reconstruction by denoising light without blurring materials.

Proceedings of High Performance Graphics, 2017
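One common way to denoise light without blurring materials is albedo demodulation: divide the noisy radiance by the surface albedo, filter the resulting illumination, then multiply the albedo back in. The sketch below assumes a 1D row of pixels and uses a plain box filter as a stand-in for the paper's actual reconstruction filter; `box_filter` and `denoise_demodulated` are hypothetical names.

```python
def box_filter(values, radius):
    """Average each entry with its neighbours within `radius` (window clamped at edges)."""
    n = len(values)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        out.append(sum(values[lo:hi]) / (hi - lo))
    return out

def denoise_demodulated(radiance, albedo, radius=1, eps=1e-6):
    """Filter illumination only, so texture detail carried by `albedo` stays sharp."""
    # Demodulate: recover the (noisy) incident illumination per pixel.
    illum = [c / max(a, eps) for c, a in zip(radiance, albedo)]
    # Filter the illumination, which is usually smoother than the final image.
    illum = box_filter(illum, radius)
    # Remodulate with the noise-free albedo from the rasterized G-buffer.
    return [l * a for l, a in zip(illum, albedo)]
```

Filtering `radiance` directly would smear a high-contrast texture such as a checkerboard, whereas the demodulated path reproduces it exactly when the illumination is constant.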

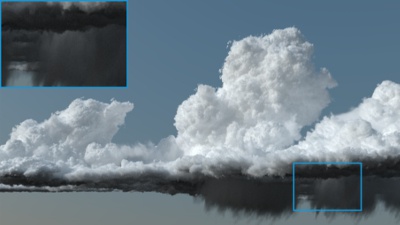

Beyond Points and Beams: Higher-Dimensional Photon Samples for Volumetric Light Transport

We develop a theory of volumetric density estimation which generalizes prior photon point and beam approaches to a broader class of estimators using “nD” samples along photon and/or camera subpaths. Our theory shows how to replace propagation distance sampling steps across multiple bounces to form higher-dimensional samples such as photon planes, photon volumes and beyond.

ACM Transactions on Graphics (Proc. of SIGGRAPH), 2017

Nonlinearly Weighted First-order Regression for Denoising Monte Carlo Renderings

We address the problem of denoising Monte Carlo renderings by studying existing approaches and proposing a new algorithm that yields state-of-the-art performance on a wide range of scenes. We analyze existing approaches from a theoretical and empirical point of view. The observations from our analysis inform the design of our new filter, which offers high-quality results and stable performance.

Computer Graphics Forum (Proc. of EGSR 2016)

A Practical and Controllable Hair and Fur Model for Production Path Tracing

We present an energy-conserving fiber shading model for hair and fur that is efficient enough for path tracing. Our model adopts a near-field formulation to avoid the expensive integral across the fiber, accounts for all high order internal reflection events with a single lobe, and proposes a novel, closed-form distribution for azimuthal roughness based on the logistic distribution.

Computer Graphics Forum (Proc. of Eurographics 2016)
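To illustrate why a logistic distribution suits azimuthal roughness, here is a generic logistic distribution with scale `s`, trimmed to [-π, π] so that it is a valid density on the azimuthal domain, with closed-form inverse-CDF sampling. This is an assumed, generic formulation: the mapping from the user-facing roughness to `s` used in the paper is not reproduced here, and the function names are hypothetical.

```python
import math

def logistic_pdf(x, s):
    """Logistic density with mean 0 and scale s > 0 (symmetric form avoids overflow)."""
    e = math.exp(-abs(x) / s)
    return e / (s * (1.0 + e) ** 2)

def logistic_cdf(x, s):
    return 1.0 / (1.0 + math.exp(-x / s))

def trimmed_logistic_pdf(x, s, a=-math.pi, b=math.pi):
    """Logistic density renormalised to the interval [a, b]."""
    if x < a or x > b:
        return 0.0
    return logistic_pdf(x, s) / (logistic_cdf(b, s) - logistic_cdf(a, s))

def sample_trimmed_logistic(u, s, a=-math.pi, b=math.pi):
    """Map u in [0, 1) to [a, b] by inverting the trimmed CDF in closed form."""
    k = logistic_cdf(b, s) - logistic_cdf(a, s)
    p = u * k + logistic_cdf(a, s)
    # Logistic quantile function: x = -s * ln(1/p - 1).
    x = -s * math.log(1.0 / p - 1.0)
    return min(max(x, a), b)  # guard against round-off at the interval ends
```

Both the density and its inverse CDF are elementary functions, so evaluation and sampling stay cheap inside a path tracer's inner loop.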

Portal-Masked Environment Map Sampling

In this paper, we introduce a new algorithm for importance sampling environment lighting in indoor scenes. We make use of portals to importance sample the product of visibility and environment lighting. The resulting algorithm is easy to implement and yields considerable improvements over traditional environment map sampling.

To appear in Computer Graphics Forum (Proc. of EGSR 2015)

Informed Choices in Primary Sample Space

In this thesis, we investigate mappings from primary sample space to path space and their inverses. We describe how to construct inverses of several path sampling techniques employed in graphics, and use these inverses to create two new perturbations for Multiplexed Metropolis Light Transport.

Master's Thesis, August 2015 (released March 2017)
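To make the idea of inverting a path sampling technique concrete: a technique maps primary-sample-space coordinates (u1, u2) in [0, 1)² to a direction, and its inverse recovers the (u1, u2) that would have produced a given direction. The sketch below uses cosine-weighted hemisphere sampling via the simple polar mapping as an assumed example; it is not one of the specific inverses from the thesis.

```python
import math

def sample_cosine_hemisphere(u1, u2):
    """Map (u1, u2) in [0, 1)^2 to a unit direction with pdf cos(theta)/pi (z is up)."""
    r = math.sqrt(u1)
    phi = 2.0 * math.pi * u2
    x, y = r * math.cos(phi), r * math.sin(phi)
    z = math.sqrt(max(0.0, 1.0 - u1))
    return (x, y, z)

def invert_cosine_hemisphere(d):
    """Recover the primary sample (u1, u2) that produces direction d."""
    x, y, _ = d
    u1 = x * x + y * y  # equals r^2 = u1
    u2 = math.atan2(y, x) / (2.0 * math.pi)
    if u2 < 0.0:
        u2 += 1.0  # atan2 returns angles in (-pi, pi]; wrap back into [0, 1)
    return (u1, u2)
```

With an inverse like this, an existing path segment can be re-expressed in the primary coordinates of a different technique without moving the path, which is the kind of transition the RJMLT perturbation relies on.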

BSSRDF Explorer

An interactive, GPU-accelerated tool for manipulating bidirectional scattering-surface reflectance distribution functions (BSSRDFs), in the spirit of the BRDF Explorer. This project was implemented as part of my Bachelor's thesis at Disney Research Zurich.

Bachelor's Thesis, April 2013

Projects

Tungsten Renderer

An open-source, physically based renderer written in C++11. Features state-of-the-art material models and importance sampling techniques. Optimized for multicore SIMD hardware.

Originally created for a university competition, Tungsten is now taking up most of my free time and is still in development.

Tantalum Renderer: 2D Light Transport

An open source physically based GPU 2D renderer written in JavaScript and WebGL.

Created for fun and out of personal interest.

Virtual Femto Photography

A 2D implementation of transient rendering.

Created for fun and out of personal interest.

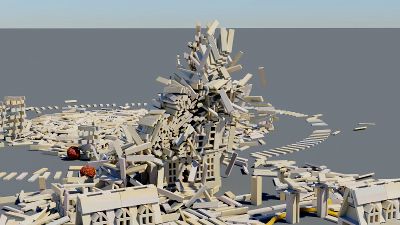

Rigid Body Simulator

An offline rigid body simulator with stable stacking, resting contacts, friction, soft constraints and flexible rods. Optimized for multicore SIMD hardware.

Used to create the short film "Planky with a chance of Meatballs" in collaboration with Antoine Milliez. It received the Jury Award and the Audience Award for best project at the PBS competition at ETH Zurich.

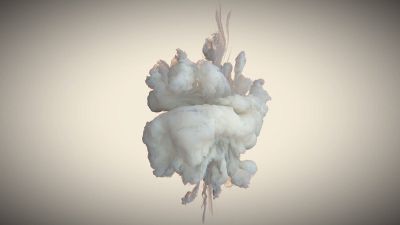

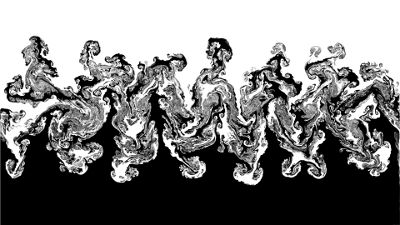

GPU Milkdrops

An offline vorton fluid simulator and volumetric path tracer implemented completely on the GPU.

Implemented mainly for fun and out of personal interest, it is used to simulate and render high-resolution milk drop collisions.

In 2013 I squeezed the code into a 4 KB executable and submitted it to Demodays, where it won 2nd place in the "4k Executable Graphics" competition.

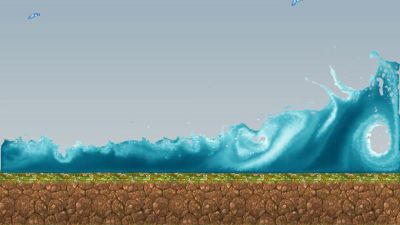

2D Smoothed Particle Hydrodynamics

A robust, real-time CPU implementation of smoothed particle hydrodynamics (SPH) in 2D. Supports up to 70,000 particles at 60 frames per second.

Rendering is performed on the GPU using anisotropic Gaussian splats as well as an aeration/diffusion model to render air bubbles transported with the fluid.

Implemented for the puzzle game "Bring Back Winter".
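At the heart of any SPH solver is the kernel-weighted density estimate ρᵢ = Σⱼ mⱼ W(|xᵢ − xⱼ|, h). The sketch below computes it in 2D with the widely used poly6 kernel, whose 2D normalisation is 4/(πh⁸), and a brute-force neighbour loop. Both the kernel choice and the O(n²) search are assumptions for clarity; a real-time solver would normally look up neighbours through a spatial grid.

```python
import math

def poly6_2d(r2, h):
    """2D poly6 smoothing kernel W(r, h), taking the squared distance r2."""
    h2 = h * h
    if r2 >= h2:
        return 0.0
    return 4.0 / (math.pi * h ** 8) * (h2 - r2) ** 3

def sph_densities(positions, masses, h):
    """Density at each particle: rho_i = sum_j m_j * W(|x_i - x_j|, h)."""
    densities = []
    for xi, yi in positions:
        rho = 0.0
        for (xj, yj), mj in zip(positions, masses):
            dx, dy = xi - xj, yi - yj
            rho += mj * poly6_2d(dx * dx + dy * dy, h)  # includes the self term j == i
        densities.append(rho)
    return densities
```

Because the kernel integrates to one over the plane, a uniformly packed block of particles recovers its rest density away from the edges.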

Star Stacker

A project on enhancing low quality night sky images using computer vision.

Using a combination of ensemble models, blob transforms, feature tracking and non-linear optimization, we can remove light pollution, extract stars, track constellations and align multiple poor quality images to produce a single high-quality one.

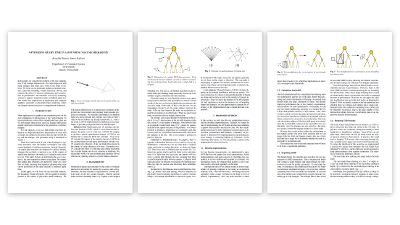

BVH Analysis

An extensive performance analysis of different bounding volume hierarchy (BVH) data structures, done in collaboration with Simon Kallweit.

We implemented various BVHs and optimized BVH traversal using different techniques such as SIMD vectorization, alignment, reordering for improved cache hit rate, sorting of input rays and more. We benchmarked and analysed the resulting code to get an estimate of which techniques perform well in practice.

We summarized our results in a scientific report, which is of interest to anyone implementing high-performance applications that rely on fast ray-primitive intersection.
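Most BVH traversal variants, vectorized or not, bottom out in a ray versus axis-aligned box test. Below is a scalar sketch of the standard slab test, assuming the reciprocal ray direction is precomputed once per ray (a common trick that removes divisions from the inner loop). It is an illustration of the general technique, not code from the report, and the function names are hypothetical.

```python
import math

def reciprocal(d):
    """Per-axis 1/d, mapping zero components to signed infinity."""
    return tuple(1.0 / c if c != 0.0 else math.copysign(math.inf, c) for c in d)

def ray_aabb(origin, inv_dir, box_min, box_max, t_max=math.inf):
    """Slab test: return the entry distance if the ray hits the box, else None.

    Origins lying exactly on a slab plane with a zero direction component
    are not handled specially here.
    """
    t0, t1 = 0.0, t_max
    for o, inv, lo, hi in zip(origin, inv_dir, box_min, box_max):
        # Distances to the two planes bounding this axis' slab.
        t_near = (lo - o) * inv
        t_far = (hi - o) * inv
        if t_near > t_far:
            t_near, t_far = t_far, t_near
        # Intersect the running interval with this slab's interval.
        t0 = max(t0, t_near)
        t1 = min(t1, t_far)
        if t0 > t1:
            return None
    return t0
```

Because each axis is handled by the same few multiplies, mins and maxes, the loop maps naturally onto SIMD lanes when several boxes or rays are tested at once.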

GPU-Fluid

Open-source, offline GPU fluid solver featuring the fluid-implicit-particle (FLIP) method, a preconditioned conjugate gradient solver with the incomplete Poisson preconditioner, and third-order Runge-Kutta advection on a MAC grid. Implemented completely on the GPU via stream compaction and histopyramids.

Created as an exercise in GPGPU and efficient parallel stream compaction, it now lives on GitHub.
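The pressure projection in a grid fluid solver typically reduces to a symmetric positive-definite Poisson system, which preconditioned conjugate gradient (PCG) solves iteratively. The matrix-free sketch below applies a 1D Poisson operator and swaps in a simple Jacobi (diagonal) preconditioner in place of the incomplete Poisson preconditioner used in the project. It runs on the CPU and only illustrates the iteration; `apply_laplacian_1d` and `pcg` are hypothetical names.

```python
def apply_laplacian_1d(x):
    """Matrix-free 1D Poisson operator: (A x)_i = 2 x_i - x_{i-1} - x_{i+1}, zero Dirichlet ends."""
    n = len(x)
    out = []
    for i in range(n):
        left = x[i - 1] if i > 0 else 0.0
        right = x[i + 1] if i < n - 1 else 0.0
        out.append(2.0 * x[i] - left - right)
    return out

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def pcg(apply_a, b, inv_diag, tol=1e-10, max_iter=1000):
    """Conjugate gradient preconditioned with M^{-1} = diag(inv_diag) (Jacobi)."""
    x = [0.0] * len(b)
    r = list(b)                                   # residual b - A x, with x = 0
    z = [ri * di for ri, di in zip(r, inv_diag)]  # preconditioned residual M^{-1} r
    p = list(z)                                   # search direction
    rz = dot(r, z)
    for _ in range(max_iter):
        if dot(r, r) ** 0.5 < tol:
            break
        ap = apply_a(p)
        alpha = rz / dot(p, ap)
        x = [xi + alpha * pk for xi, pk in zip(x, p)]
        r = [ri - alpha * apk for ri, apk in zip(r, ap)]
        z = [ri * di for ri, di in zip(r, inv_diag)]
        rz_new = dot(r, z)
        beta = rz_new / rz
        rz = rz_new
        p = [zi + beta * pk for zi, pk in zip(z, p)]
    return x
```

Every step is a matrix-free operator application, a dot product or an element-wise update, which is why PCG ports well to the GPU.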

Incremental Fluids

An open-source tutorial series/reference implementation for Eulerian fluid solvers, intended as a tool for others to learn computational fluid dynamics.

Tensile

Real-time computer-generated short film implemented in a 64 KB binary, in collaboration with Simon Kallweit and Vitor Bosshard. Won 2nd place in the "Real-time size limited" competition at Revision 2013.

I contributed a procedural mesh generation pipeline including robust Voronoi fracturing, procedural trees and procedural interlocking axle/gear mechanisms. Additionally, I implemented the rendering pipeline and post-processing stack.

Wheels Within Wheels

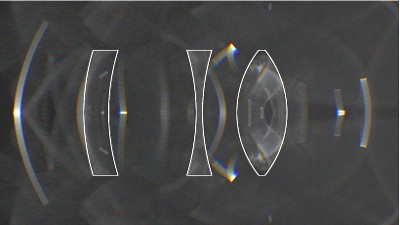

Real-time computer-generated short film implemented in a 64 KB binary, in collaboration with Simon Kallweit and Vitor Bosshard. Won 1st place in the "Real-time size limited" competition at Demodays 2012.

I contributed a full HDR post-processing pipeline including deferred shading, motion blur, depth of field, god rays, bloom and more. I also created part of the procedural content (everything involving cogs, gears, and infinite zoom).

Bismuth Compiler

A BASIC-like language and compiler, developed using Flex and Bison. Features classes with inheritance, method overloading, a module system and interfaces to C/C++.

The language was originally developed as a modern alternative to popular BASIC-like languages (BlitzBasic, FreeBasic, etc.). The compiler was partially self-hosting and fully functional, but unfortunately due to time constraints I had to abandon the project before I was able to build a stable ecosystem.

Sparse Voxel Octrees

An open-source, real-time, multi-threaded CPU implementation of Sparse Voxel Octrees. Includes robust octree generation capable of handling out-of-core construction.

Originally created out of personal interest, it now lives on GitHub.

Histogram-Preserving Tiling

A WebGL implementation of the paper "On Histogram-preserving Blending for Randomized Texture Tiling".
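The central operator of that paper is a variance-preserving blend: after the input texture is transformed to have a Gaussian histogram, three randomly offset patch lookups G₁, G₂, G₃ are combined with barycentric weights wᵢ as G = μ + Σ wᵢ (Gᵢ − μ) / √(Σ wᵢ²), which keeps the mean and variance of the Gaussian input unchanged; the result is then mapped back through the inverse histogram transformation. The sketch shows only the blend on scalar values, assuming independent lookups and weights that sum to one; the Gaussianisation and lookup-table steps are omitted, and the function name is hypothetical.

```python
import math

def variance_preserving_blend(values, weights, mean):
    """Blend samples so the result keeps the input mean and variance.

    Assumes the samples are independent draws with a common mean and
    variance (e.g. Gaussianised texture lookups) and that weights sum to 1.
    """
    norm = math.sqrt(sum(w * w for w in weights))
    centered = sum(w * (v - mean) for v, w in zip(values, weights))
    return mean + centered / norm
```

A plain weighted average would shrink the standard deviation by a factor of √(Σ wᵢ²), visibly washing out contrast near patch seams; dividing by that factor is what preserves the histogram's spread.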